Axes of Planning (in AI Models)

Trying to somewhat decompose the term "planning"

Do language models plan?

When people study language models and want to use interpretability and evaluations to understand their behavior, one natural question to ask is whether the model is planning.

However, “planning” is a relatively vague concept that points at a few different things.

I try to walk through a bunch of examples that seem somehow related to aspects of “planning”, then try to divide planning into a few different axes.

Some related examples

Here are a few different things that seem related to planning:

The model is writing something, and brings to its attention some fact that is not useful for the immediate next-token prediction, but that may or may not be useful later.

The model realizes it is finished with the current point, and gives an output that indicates it wants to move on to the next point (e.g. the paragraph ends with a newline).

The model has read that it is supposed to move on to the next line, and needs to start writing about the next thing.

The model has a vague outline of what the whole output is going to say but hasn’t written it down.

The model spends some time reasoning about the best policy to use in a game, but within the game doesn’t deviate from the simple [observation] → [action] policy.

The model has turned the vague outline into a written outline of what it is going to say, and is now trying to follow that outline.

One robot wants to go to the north pole, and each day goes north one mile as a result of this. The other robot doesn’t care about going to the north pole, but also just goes north one mile each day.¹

The model does not have an outline of what it is going to say, and is just writing section by section based on what feels like the next heading or section to write.

The model knows that the outputs need to eventually reach some end state X, and while outputting things considers things related to X more prominently.

The model has some end state X it needs to reach, and has deduced that it needs to do Y to reach X, so it writes things that get it to Y without writing this reasoning down explicitly.

The model has some constraint on how some later part of the output needs to look, and thus conditions some earlier outputs on this.

The model has some explicit branching state: it knows that in order to reach output Y or Z, it first needs to confirm or deny claim A, after which reaching Y or Z would be easy. It has not yet resolved A, so it does this first.

Some of these seem more related to “planning” than others. Can we try to break down the different aspects?

Breaking it down a bit

If one is thinking about the question “what kind of planning is the model doing?”, then the word planning can mean a lot of different things.

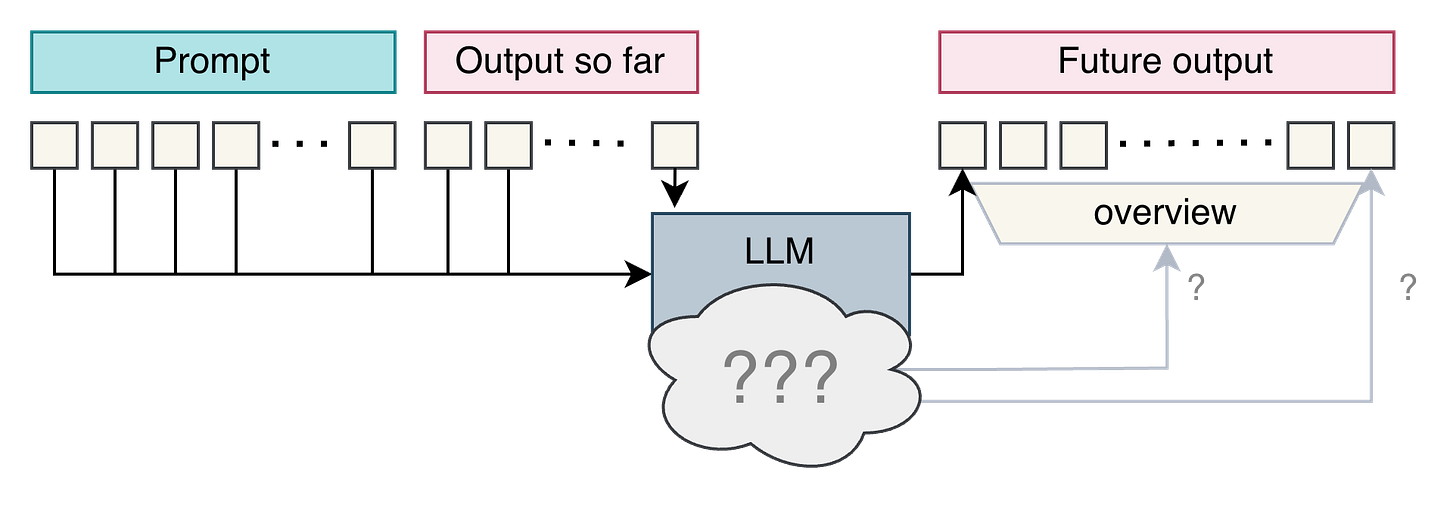

Time Horizon: How far ahead is the model representing what outputs might look like? The next token? The next phrase? The next sentence or paragraph? The next section? The whole output?

Vague vs Specific: How fine-grained is the model’s representation? Does it have a vague theme? Does it have a topic? Does it have an outline on the level of sections? Does it have specific sub-goals that it wants to achieve on a high level? Does it have a detailed plan for how it’s going to achieve those sub-goals?

Option space: Does the model have an idea of what the possible outcomes are? Are there many specific options being considered explicitly? Or one broad vague option? Or one narrow option, perhaps with some idea of what might cause the model to change course?

Forward Dependency/Constraints: To what extent is the forward-looking reasoning shallow or nested? Were there a lot of constraints that meant it needed to prune the option space a lot? Is there much sequential dependence between the constraints? Or does it have some graph of steps that need to be completed in order before the end goal?

This bucket also raises the question of whether the planning is more “implicit” or “explicit”. That is, I would say there are qualitatively different ways one can “plan”:

Implicit Planning: Saving useful information now, or doing some computation on the context, because it seems like the kind of thing that might be used later. In my mind this means things like “Oh, I have seen this topic before, here are some templates and structures I could follow”.

Explicit Planning: Realizing that there are constraints about the future, and changing behavior now to condition on those later constraints. This could mean like

More explicit planning seems to be higher on the axis of forward dependency and following constraints.

Externalized vs Internalized: Sometimes people investigate a written outline of a plan, sometimes people investigate what is happening in activation space. These are pretty related but worth distinguishing.

Consistency: Does the model decide once what to do? Or does the model constantly re-evaluate what it wants to do at each step? The “most planning”-like behavior seems to involve mostly sticking to one plan over a longer stretch, while still being able to readjust.

There may also be some other aspects I am missing, and I might be confused about how some of these fit together.
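One way to keep the axes above straight is to write them down as a small record and place a couple of the earlier examples on it. This is only a rough sketch: the names `Horizon` and `PlanningProfile`, the numeric scales, and the specific values given to the two examples are my own illustrative assumptions, not measurements of any model.

```python
from dataclasses import dataclass
from enum import Enum


class Horizon(Enum):
    """Time horizon axis: how far ahead the model represents its output."""
    NEXT_TOKEN = 1
    NEXT_PHRASE = 2
    NEXT_SENTENCE_OR_PARAGRAPH = 3
    NEXT_SECTION = 4
    WHOLE_OUTPUT = 5


@dataclass(frozen=True)
class PlanningProfile:
    """Hypothetical record placing one behavior on each axis."""
    horizon: Horizon
    specificity: float      # assumed scale: 0.0 = vague theme, 1.0 = detailed plan
    options_considered: int  # how many distinct options are tracked explicitly
    dependency_depth: int    # 0 = shallow, higher = more nested constraints
    externalized: bool       # True if the plan is written out in the output
    re_evaluates: bool       # True if the plan is reconsidered at each step

    def is_more_explicit_than(self, other: "PlanningProfile") -> bool:
        # Follows the text: more explicit planning sits higher on the
        # forward dependency / constraints axis.
        return self.dependency_depth > other.dependency_depth


# Illustrative guesses for two of the examples from the list above.
outline_follower = PlanningProfile(
    horizon=Horizon.WHOLE_OUTPUT,
    specificity=0.5,
    options_considered=1,
    dependency_depth=1,
    externalized=True,
    re_evaluates=False,
)
section_by_section = PlanningProfile(
    horizon=Horizon.NEXT_SECTION,
    specificity=0.2,
    options_considered=1,
    dependency_depth=0,
    externalized=False,
    re_evaluates=True,
)

print(outline_follower.is_more_explicit_than(section_by_section))
```

The point of writing it this way is just that the axes are mostly independent: two behaviors can share a time horizon while differing on whether the plan is externalized or how often it is re-evaluated.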

There is some research in these directions, all labelled as being about “planning”.

I will try to go over some papers in a paper review tomorrow.
